The ECGI blog is kindly supported by

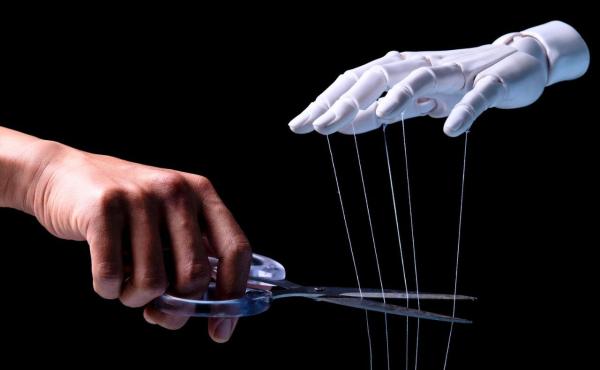

AI in the Boardroom: Why Smarter CEOs May Mean Weaker Governance

What if AI makes CEOs more capable but less accountable? The conventional wisdom about new technologies in business goes something like this: new technologies make people more productive, more productive people make better decisions, and better decisions are good for everyone. It is a comforting story. It is also incomplete.

In a new paper with Jin Li from HKU Business School, we argue that when CEOs adopt AI, the consequences for corporate governance can be surprisingly perverse. AI makes CEOs less dependent on their boards. That sounds like empowerment. But it also disrupts the information flows that hold the CEO–board relationship together, leaving firms with more entrenched CEOs, weaker monitoring, and governance structures that may be worse for shareholders.

Here is the mechanism. Start with a CEO who identifies a potential deal or strategic opportunity. She must then assess it. If she cannot assess it on her own, she can ask the board for help. But asking for help is costly: it signals that the CEO may not be very good at her job, which increases the chance that the board will replace her.

This creates a tension. The board wants the CEO to share information. The CEO wants to protect her job. To induce honest communication, the firm must commit to a board that is somewhat “CEO-friendly”—one that does not always use what the CEO says against her. Some degree of CEO entrenchment is the price firms pay for information. This logic—that “friendly boards” can be good for shareholders—is one I have explored in earlier work with Renée Adams.

Now introduce AI. In our model, AI has two distinct capabilities. First, it can independently solve some problems that the CEO cannot, acting as a coworker. Second, it can make the CEO better at solving problems on her own, acting as a tool that augments her skills.

A simple example helps. Imagine a CEO evaluating a possible acquisition. In the past, she might have relied on the board’s experience to think through strategic fit, execution risk, or downside scenarios. With AI tools producing rapid summaries, scenarios, and comparisons, she may feel less need to involve directors early—reducing both the board’s input and the board’s visibility into how the decision is being made.

Both AI capabilities reduce the CEO’s incentive to consult the board. If AI solves problems the CEO previously needed help with, why ask the board at all? And if AI makes the CEO’s job easier, the firm can pay her less—but lower pay also weakens her incentive to share information with directors.

The result is the same in both cases: if the firm wants to preserve the CEO’s willingness to communicate, it must make the board even more CEO-friendly. Monitoring intensity falls; CEO entrenchment rises. But the CEO is no better off, because the lower risk of dismissal is exactly offset by lower pay.

Whether AI helps or hurts overall depends on what it mainly does inside the firm. When AI acts as a coworker—solving problems the board would otherwise have helped with—it displaces valuable board input without improving information flows, and that destroys value. When AI acts as a tool that makes the CEO more capable, the direct productivity gains can outweigh the governance cost. But the governance distortion is present either way.

This points to a broader paradox. We are used to thinking of information technology as a way to reduce transaction costs. AI gives firms better data, faster analysis, and sharper predictions. But our model shows that AI can increase a different kind of cost: the cost of getting an agent to communicate truthfully.

The key insight is that AI changes not just what the CEO does, but what she can get away with. It raises the value of going it alone. And that temptation is what makes open communication more difficult, forcing firms to weaken monitoring in order to keep information flowing.

This is not just a theoretical curiosity. Recent survey evidence shows that AI adoption by top executives increased by more than 50% in 2025, and that CEOs are more frequent users of AI than other executives. As AI capabilities continue to improve, these incentive effects are likely to become more pronounced.

Can boards fight back? We also consider what happens when boards adopt AI in their advisory and monitoring roles. Board AI adoption does help: it attenuates some of the negative effects on monitoring intensity and pay. But here is the catch. To fully reverse the damage, technological progress must be significantly biased toward the board. AI must enhance directors’ capabilities more than CEOs’.

That seems unlikely. CEOs interact with AI daily. They use it for strategic planning, deal assessment, and idea generation. Most independent directors serve part-time. If anything, the natural asymmetry favors CEOs. The prediction, then, is clear: on balance, AI adoption should reduce board monitoring and CEO pay.

AI is transforming how executives make decisions. That transformation is mostly beneficial. But it has a side effect that firms should take seriously: it weakens the governance mechanisms that keep CEOs accountable. The solution is not to resist AI adoption. It is to recognize that governance structures designed for the pre-AI era need updating. Boards, compensation committees, and shareholders must understand that when CEOs gain powerful new analytical tools, the old equilibrium breaks down. Restoring it requires deliberate adjustments to monitoring, compensation, and the flow of information between executives and directors. Organizations that fail to make these adjustments may discover that their AI investments, far from improving decision-making, have quietly worsened their governance.

_____________

Daniel Ferreira is a Professor of Finance at the London School of Economics, a CEPR Research Fellow, and an ECGI Fellow and Research Member.

This blog is based on a paper presented at the 2026 Corporate Governance Symposium and John L. Weinberg/IRRCi Research Paper Award Competition on 6th March 2026.

The ECGI does not, consistent with its constitutional purpose, have a view or opinion. If you wish to respond to this article, you can submit a blog article or 'letter to the editor'.