Algorithmic Incompetence: The Fiduciary Duty Your Board Is Already Breaching

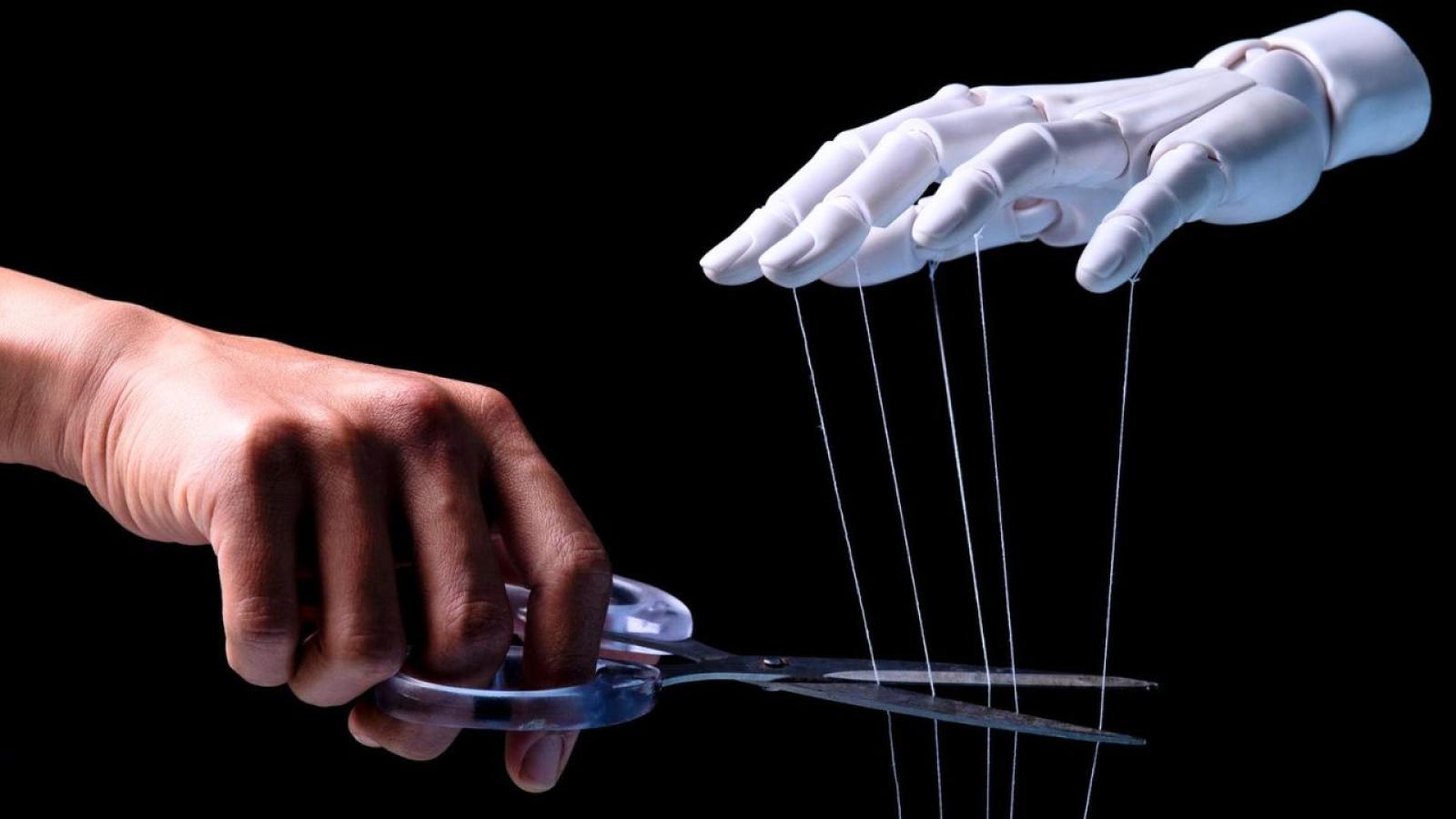

A pillow in the wrong hands suffocates; in the right hands, it supports. Roberto Cingolani's metaphor captures what corporate law has always known: responsibility lies not with the instrument but with whoever adopts it without understanding its implications.

In boardrooms across Europe and North America, a quiet abdication is underway. Boards are adopting algorithmic systems they do not understand, delegating comprehension to opaque technologies, and assuming that regulatory grace periods exempt them from thinking. They are wrong. The duty to understand what you govern is not a novelty of the AI Act — it is an ancient obligation that artificial intelligence now renders inescapable.

The law already speaks

The principles underlying algorithmic governance trace back to Roman mandatum: whoever acts on behalf of another without understanding what they govern does not perform — they let things run. The medieval Court of Chancery built fiduciary duty on the same premise. Article 2392 of the Italian Civil Code, Section 93 of the German Aktiengesetz, and Section 174 of the UK Companies Act 2006 are contemporary translations of one principle: technological complexity must not become a shield for human irresponsibility.

Courts are not waiting for the AI Act to enforce this. In February 2023, Germany's Federal Constitutional Court declared unconstitutional the use of the Palantir "HessenDATA" platform without substantive human oversight. In Italy, the Tribunal of Siracusa (No. 338/2026) held that uncritical reliance on AI-generated legal citations constitutes professional negligence. In France, six rulings between December 2025 and February 2026 established that professionals who delegate judgment to algorithmic systems bear full liability for the outputs.

The message is consistent: whoever exercises a function affecting third parties cannot delegate judgment to a system they neither understand nor supervise.

From incompetence to complicity

The spectrum of board failure runs from ignorance to wilful blindness. The Delaware Caremark doctrine holds that a board breaches its fiduciary duty when it fails entirely to institute a reporting system on critical risks, or consciously ignores the warnings such a system produces. The Boeing 737 MAX settlement of 237.5 million dollars was levied against directors who possessed no engineering expertise but had the duty to oversee a mission-critical system.

On 25 March 2026, a Los Angeles jury took this further. In K.G.M. v. Meta Platforms, the first jury verdict finding social media platforms liable for negligent design, Meta was held 70% responsible and YouTube 30% for harm caused to a young user by addictive algorithmic features. Internal documents showed executives knew their algorithms harmed adolescents — an Instagram employee described the company as "basically pushers" — and chose not to act.

The Boeing board did not understand. The Meta board understood perfectly and used that understanding to increase profit at the expense of minors. We move from algorithmic incompetence to algorithmic competence exercised with wilful misconduct — fiduciary abdication in its gravest form.

The governance gap is measurable

The international framework now offers convergent tools to assess board diligence. The NIST AI Risk Management Framework organises algorithmic risk management into four auditable functions: Govern, Map, Measure and Manage. ISO/IEC 42001:2023 transforms these into certifiable requirements. The ICGN Guidance on Artificial Intelligence (2024) translates technical consensus into investor expectations: 58% of organisations lack AI competencies on the board, 80% have no audit process for algorithmic systems, and 86% use AI without board awareness.

These instruments do not create new obligations. They render measurable obligations that already exist under Article 2086 of the Italian Civil Code, the duty to act on an informed basis, and the qualified diligence standard that every major jurisdiction imposes on directors. A board that documents its deliberative process regarding AI adoption, supervision and provider assessment will fall within the Business Judgment Rule. A board that does not has already abdicated.

The ancient duty made inescapable

Dario Amodei, CEO of Anthropic, has defined the current phase as the "adolescence of technology": transformative capacity without the institutional maturity to govern it. I have analysed the situation across several markets (the EU, Asia and the US), not as an academic exercise but to show that existing company law already contains what is needed. There is no need to wait for new legislation; the practical instruments are already available.

The duty — enterprise by enterprise, resolution by resolution — is already written in the law we have inherited. The question is not whether boards will be held accountable for algorithmic incompetence. The question is whether they will understand this before the courts remind them.

__________

Domenica Lista is Corporate Affairs, Governance Officer and Board Secretary at Leonardo S.p.A., co-founder of AIRIA, and an ECGI Practitioner member. The views expressed are solely her own.

The ECGI does not, consistent with its constitutional purpose, have a view or opinion. If you wish to respond to this article, you can submit a blog article or 'letter to the editor'.