The ECGI blog is kindly supported by

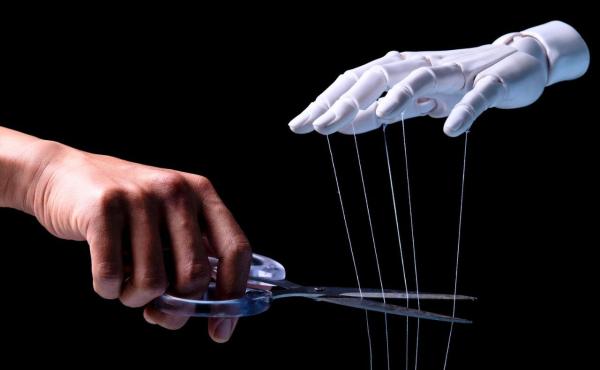

AI Directors and the Independence Illusion

Corporate governance does not have an independence problem. It has an honesty problem.

For decades, the law has insisted that boards can be both socially legitimate and genuinely independent: that directors can owe their seats to management and still monitor management without bias. This insistence has produced a long succession of independence standards, from NYSE listing requirements to Sarbanes-Oxley’s audit committee mandates. The search for genuine independence has been relentless, and it has failed for a structural reason: genuine independence is incompatible with the social architecture of the boardroom itself.

Even directors who satisfy every technical criterion of independence remain embedded in elite social networks that compromise their judgment. As Victor Brudney observed, independent directors are rarely appointed “without at least the prior approval of management.” This creates a selection bias that no disclosure requirement can cure; selection itself creates dependence. The result is a system where “independence” has become a fiction: legally certified but sociologically impossible. This embeddedness is a feature, not a bug, of how managerial capitalism stabilizes itself.

Consider the Block-Tidal acquisition, in which Jack Dorsey’s board approved a $300 million purchase of a financially distressed music streaming company owned by his friend, Jay-Z. The court dismissed the shareholder suit while acknowledging that the deal was “by all accounts, a terrible business decision.” The court’s reasoning was doctrinally sound: mere friendship does not constitute a disabling conflict under Delaware law. But that is precisely the point. The doctrine is toothless against the most common form of boardroom bias: the natural tendency to defer to people we like, respect, and consider friends.

The doctrinal record tells a less coherent story than the formal standard suggests. In Sandys v. Pincus, the Delaware Supreme Court reversed a lower court dismissal in significant part because one director and her husband co-owned a private airplane with the interested party. The majority inferred an “extremely close, personal bond” capable of impairing judgment, a conclusion the dissent found unsupported by the sparse allegations. Yet Beam v. Stewart had already established that a “thin social-circle friendship” does not compromise independence, the same baseline the court applied in the Block-Tidal litigation to dismiss the challenge to a $300 million acquisition. The common thread is not doctrinal consistency but judicial discretion. That discretion is not incidental to the independence framework. It is load-bearing.

A recent proposal makes the gap between certified independence and actual independence impossible to ignore. Zhaoyi Li’s “Artificial Fiduciaries” argues that AI systems should serve as independent directors with voting rights. The logic is almost tautological: genuine independence requires the absence of relational capacity, and only non-human actors can satisfy that condition. Unlike human directors, AI systems have no social networks to compromise their judgment, no career incentives to please management, and no emotional attachments that might cloud their analysis.

The obvious objection is that AI reflects the biases of its designers. But the relevant question is not whether AI directors would be perfect; it is whether they would be better than the status quo. Human directors carry biases they cannot articulate, operating through intuitions shaped by decades of social conditioning. AI systems make decisions through processes that can be audited, tested, and improved. We can patch an algorithm. We cannot patch a friendship.

The deeper resistance to the artificial fiduciary is unlikely to be technical. The threat AI directors pose is not that they might fail to satisfy independence standards, but that they satisfy them too well, exposing the fragility of a construct that was never meant to be taken seriously. If a machine can fulfill the independence ideal more faithfully than any human, then the legal insistence that directors be “natural persons” starts to look less like a safeguard and more like a defensive shield around a socially embedded elite.

This forces a choice that corporate governance has spent fifty years carefully avoiding. Either the law actually values independence—in which case artificial fiduciaries may be preferable to humans—or it prefers socially embedded directors who reproduce corporate dynamics through trust and cohesion. If the latter, independence should be abandoned as a regulatory North Star, and the law should admit that boards are designed for calibrated partiality, not neutrality.

AI directors do not solve this contradiction. They reveal it. And that is precisely why they will be resisted.

______________

Jack Resnick is a second-year J.D. candidate at Stanford Law School (Class of 2027).

The ECGI does not, consistent with its constitutional purpose, have a view or opinion. If you wish to respond to this article, you can submit a blog article or 'letter to the editor'.